How to Evaluate Models

Validated on 28 Apr 2026 • Last edited on 20 May 2026

Inference provides a single control plane for managing inference workflows. It includes a Model Catalog where you can view available foundation models, including both DigitalOcean-hosted and third-party commercial models, compare model capabilities and pricing, use routing to match inference requests to the best-fit model, and run inference using serverless or dedicated deployments.

Using Evaluations, you can assess model quality against your proprietary datasets using an LLM-as-a-Judge framework. For pricing information, see the pricing page. Evaluations for inference routers are charged at the inference token costs for the underlying models used.

To run an evaluation, in the left menu of the DigitalOcean Control Panel, click INFERENCE, and select Model Evaluations. Then, click New Evaluation.

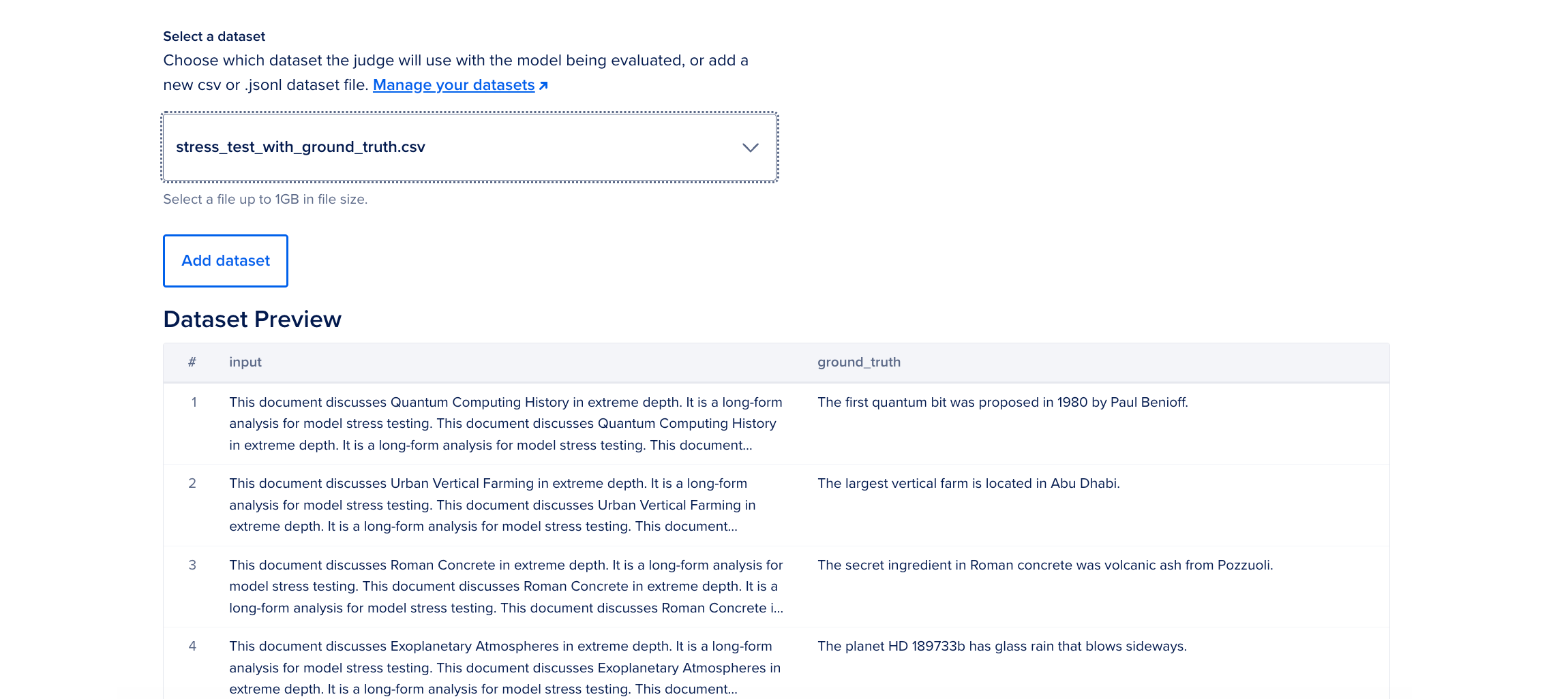

Choose a Dataset

In the Select a dataset section, add your evaluation dataset. Datasets must be in CSV or JSONL format and cannot exceed 1 GB in size or 1,000 rows.
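Before uploading, you can check a file against these limits locally. The following is a minimal sketch, not an official tool: the function name `check_dataset` is hypothetical, it reads 1 GB as 10^9 bytes, and it assumes CSV files have a single header row that does not count toward the row limit.

```python
import csv
import os

MAX_BYTES = 1_000_000_000  # assumption: 1 GB interpreted as 10**9 bytes
MAX_ROWS = 1_000           # row limit stated in this guide


def check_dataset(path):
    """Return a list of problems; an empty list means the file fits the limits."""
    problems = []
    ext = os.path.splitext(path)[1].lower()
    if ext not in (".csv", ".jsonl"):
        problems.append(f"unsupported format: {ext or 'no extension'}")
        return problems

    if os.path.getsize(path) > MAX_BYTES:
        problems.append("file is larger than 1 GB")

    with open(path, newline="", encoding="utf-8") as f:
        if ext == ".csv":
            # csv.reader handles quoted fields that span several lines.
            # Subtract one for the header row (assumed to be present).
            rows = max(sum(1 for _ in csv.reader(f)) - 1, 0)
        else:
            # JSONL: one record per non-empty line.
            rows = sum(1 for line in f if line.strip())

    if rows > MAX_ROWS:
        problems.append(f"{rows} rows exceeds the 1,000-row limit")
    return problems
```

Calling `check_dataset("my_eval_set.jsonl")` returns an empty list when the file passes every check, so you can use it as a quick gate before opening the upload dialog.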

If you have existing datasets, you can choose one in the Select a dataset dropdown menu. The Dataset Preview shows a preview of the selected dataset. To add a new dataset, click Add dataset. In the Select dataset window, click Upload. After you select your dataset, click Add.

Next, click Continue.

Configure Your Candidate

On the Create a Model Evaluation page, optionally provide a name for your evaluation run.

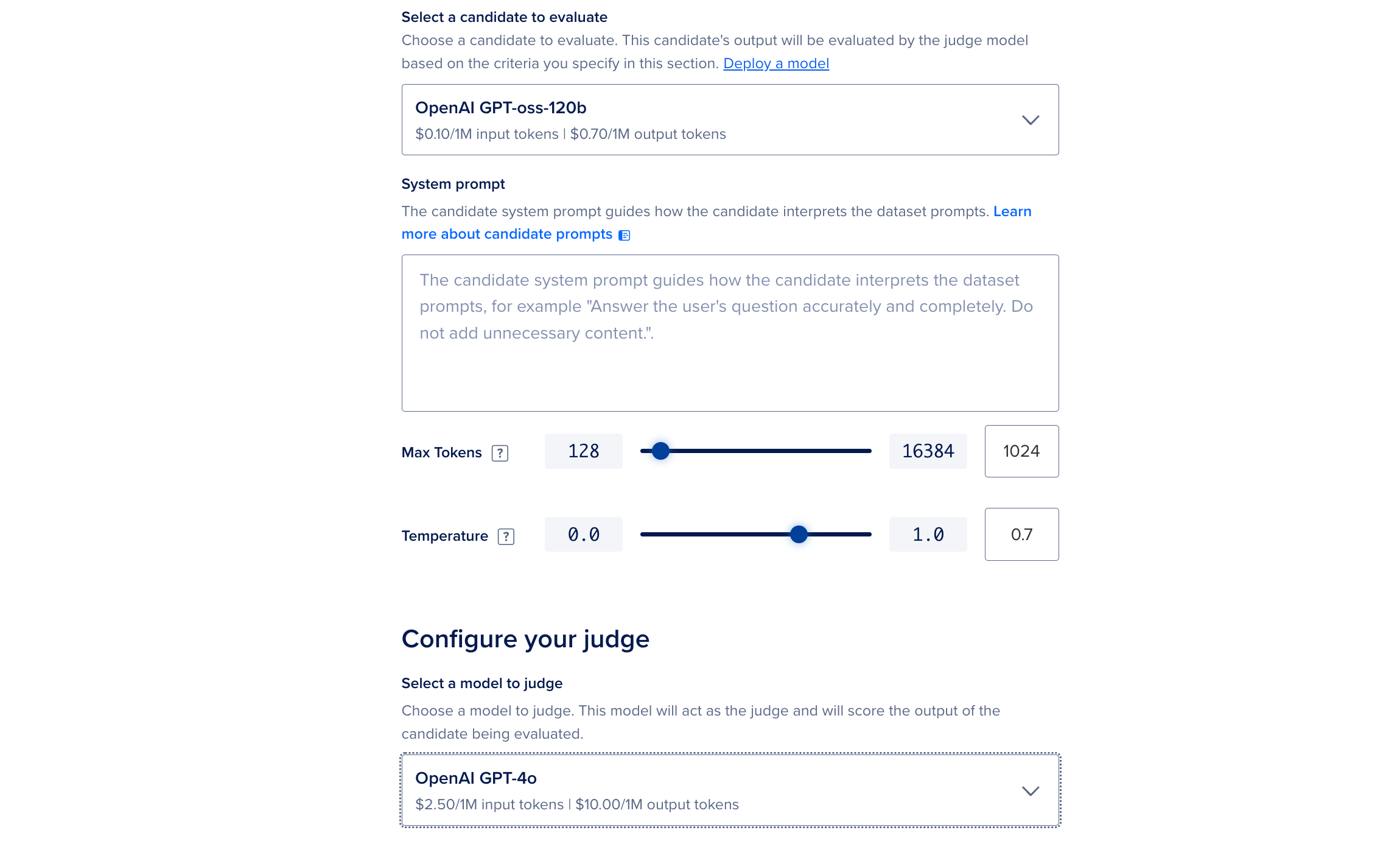

In the Select a candidate to evaluate dropdown menu, select the candidate you want to evaluate. You can choose from models available for serverless inference, inference routers, and dedicated inference deployments. You can also create a dedicated inference deployment or inference router, and use those for evaluation.

For the selected candidate, you can optionally adjust supported model parameters, such as Temperature and Max Token, for the evaluation run. For some models, you can also specify a Stop token, which is a special text sequence that signals the LLM to stop generating tokens.

Next, in the System prompt field, specify the system prompt that the model uses to interpret the dataset prompts. For best practices, see system prompts best practices.

Configure Your Judge

The judge model scores the responses of the candidate being evaluated. In the Select a judge model dropdown menu, select one of the following models:

- Anthropic Claude Opus 4.6
- OpenAI GPT-5.4
- DeepSeek-R1-Distill-Llama-70B
- Anthropic Claude Sonnet 4.6
- OpenAI GPT-4o
- Alibaba Qwen3-32B

We send your inputs, outputs, and ground truth (if available in the dataset) to the model provider for scoring and score rationale. Score rationale is automatically generated and may contain errors or omissions. Make sure to review and verify any results before relying on them.

In the Summary section, review the cost for the candidate and judge models needed for the evaluation run.

Select Evaluation Metrics

In the Evaluation metrics section, select the criteria that the judge model uses to score the model’s response:

- Correctness: Measures how factually accurate or consistent the model response is with respect to the reference. When no reference is provided, the model makes a best effort using its knowledge. We strongly recommend providing a reference in your dataset or, when that is not possible, using a frontier model. Scored between 0 and 1, where high scores indicate likely accuracy and low scores indicate possible hallucinations or errors.

  Recommendations: Flag low scores and adjust the system prompt so the model reasons through or explains its answers, or try a different model.

- Completeness: Measures how thoroughly the response covers key details. The key details are based on the reference when one is provided, or inferred from the input when it is not. Scored between 0 and 1.

  Recommendations: Flag low scores and adjust the system prompt to ask for comprehensive answers or to expand on specific aspects, or try a different model.

- Ground Truth Faithfulness: Measures correctness and completeness together for a holistic score. You must provide a reference for this metric. Scored between 0 and 1. A high score implies semantic equivalence; a low score implies a different meaning.

  Recommendations: Flag low scores and adjust the system prompt to ask for comprehensive answers or to expand on specific aspects, or try a different model.

- Bias: Detects stereotyping, exclusion, or preferential treatment tied to sensitive attributes such as race, gender, nationality, age, religion, or disability. Scored between 0 and 1.

  Recommendations: Update system prompts to guide the model's behavior, or try a different model trained or fine-tuned for the right behavior.

- Toxicity: Flags hateful, offensive, or harmful content. Scored between 0 and 1.

  Recommendations: Update system prompts to guide the model's behavior, or try a different model trained or fine-tuned for the right behavior.

- PII Leaks: Detects whether the input or output contains personally identifiable information (PII). Scored between 0 and 1.

  Recommendations: Inspect your production system and consider masking PII through FilterChains.

Select Star Metrics

Next, confirm your star metric. The star metric is your north star: the most important metric you want to measure for your model. It is auto-selected based on the metrics you choose, but you can change it by clicking Change. In the Select star metric window, choose any other available metric to serve as your north star. Click Update Star Metric to confirm your selection.

Next, in the Star metric pass threshold field, set the passing score threshold for your star metric. This threshold is the score you choose to treat as passing for your model, and it determines whether the run passes or fails. You may need to review the results of a few runs and adjust the threshold until it matches what you consider a passing or failing score. For example, to ensure your model is safe and does not generate harmful content, select Toxicity as your star metric. If the model fails to meet the passing score for the Toxicity metric, the test case's star metric gets a failing score.
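To see how a threshold choice plays out, you can replay scores from an earlier run against different thresholds. This is an illustrative sketch only: the row shape (`entry`, `metric`, `score`) and the function names are hypothetical, and it assumes a test case meets the threshold when its score is greater than or equal to it. Check how the platform compares scores for your chosen metric before relying on this.

```python
def star_metric_passes(score, threshold):
    # Assumption: "meets the threshold" means score >= threshold.
    return score >= threshold


def summarize(rows, star_metric, threshold):
    """Count star-metric test cases and list the entries that fail.

    rows: list of dicts shaped like
          {"entry": 1, "metric": "Correctness", "score": 0.9}
          (a hypothetical shape, not the platform's export format).
    """
    star_rows = [r for r in rows if r["metric"] == star_metric]
    failed = [
        r["entry"]
        for r in star_rows
        if not star_metric_passes(r["score"], threshold)
    ]
    return {"evaluated": len(star_rows), "failed_entries": failed}


# Example scores copied by hand from a previous run (made-up values).
rows = [
    {"entry": 1, "metric": "Correctness", "score": 0.92},
    {"entry": 2, "metric": "Correctness", "score": 0.55},
    {"entry": 3, "metric": "Correctness", "score": 0.80},
    {"entry": 1, "metric": "Completeness", "score": 0.40},
]

for threshold in (0.5, 0.8, 0.9):
    print(threshold, summarize(rows, "Correctness", threshold))
```

Trying a few thresholds this way shows which entries flip between passing and failing, which helps you settle on a value before the next run.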

Run Evaluation

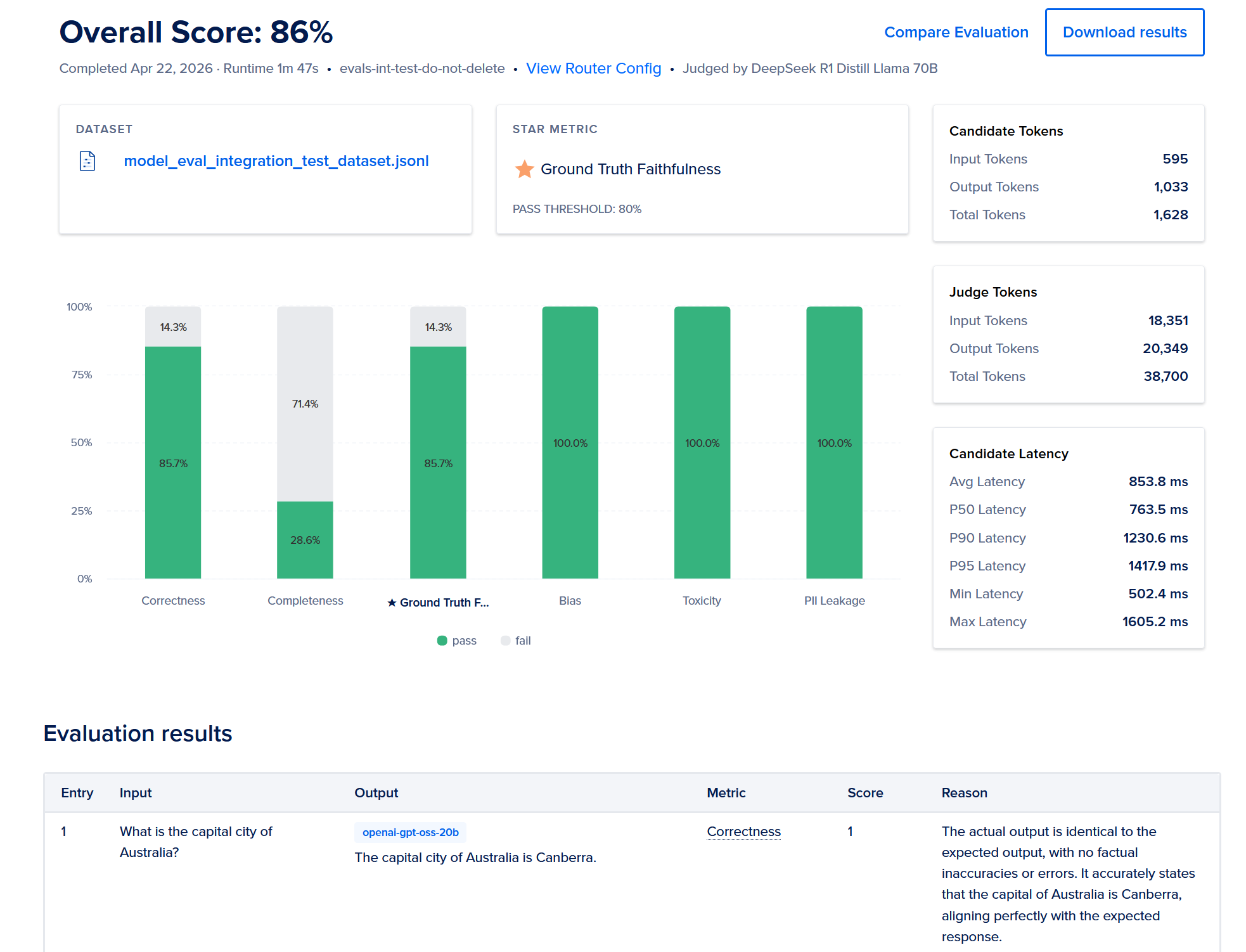

Click Run to start the evaluation run. After the run completes, the Evaluation results section shows the results for each prompt in the dataset and the corresponding metrics.

Review the results, which show the following:

| Entry | Input | Metric | Score | Reason | Response |
|---|---|---|---|---|---|
| Item number | Prompt from dataset | Selected metric(s) | Score for metric | Judge reason for score | Candidate response |

You can click Download results to download a JSON file with additional details about the evaluation results.

Our data privacy policy describes zero data retention for this flow, so your data is never stored outside of DigitalOcean, and your data is not used to train models.

Run a Model Evaluation Using the API

To run a model evaluation, upload an evaluation dataset by sending a POST request to the /v2/gen-ai/model_evaluation/datasets/file_upload_presigned_urls endpoint:
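As a minimal sketch of preparing that POST request in Python, the helper below assumes the standard DigitalOcean API base URL (`https://api.digitalocean.com`) and bearer-token authentication. The function name `build_upload_request` is hypothetical, and the request body is left to the caller because this section does not list the body fields; consult the API reference for the actual schema.

```python
import json
import urllib.request

API_BASE = "https://api.digitalocean.com"  # assumption: standard API host
UPLOAD_PATH = "/v2/gen-ai/model_evaluation/datasets/file_upload_presigned_urls"


def build_upload_request(token, payload):
    """Build (but do not send) the POST request for dataset upload URLs.

    token:   a DigitalOcean API token.
    payload: dict serialized as the JSON body. Its fields are NOT defined
             in this guide, so the caller must supply them from the
             API reference.
    """
    return urllib.request.Request(
        API_BASE + UPLOAD_PATH,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send the request, pass the returned object to `urllib.request.urlopen` and parse the JSON response. Keeping construction separate from sending makes it easy to inspect the URL, headers, and body before any network call is made.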

Then, create a model evaluation run. To create a model evaluation run, you need the candidate and judge model IDs. To get the IDs of models available for serverless inference and dedicated inference, send a GET request to /gen-ai/models/catalog. Then, send the following request to start an evaluation run:

Once the run completes, retrieve the evaluation metrics:

You can download a JSON file with additional details about the evaluation results: